This is a question that perfectly fits the “first principles” way of thinking. Most people talk about how powerful the Transformer is, but they rarely stop to ask: Why is it the Transformer? Why not something else?

To answer this, we need to peel back the layers and look at the very core of the problem.

What Exactly Are Large Language Models (LLMs) Doing?

Surprisingly, they do only one thing: predict the next word (strictly speaking, the next token, which may be a whole word or just a piece of one).

You give the model all the previous words, and it guesses what comes next. Then it appends that guess and does it again, over and over. Train it to make these guesses well enough, on enough text, and it becomes an AI that can chat with you. GPT, Claude, and DeepSeek all rest on this single task.

So, what information does the model need to predict a word correctly?

It needs to know the “relationships” between that word and all the previous words. This is actually the essence of human language. When someone hears “Apple is…”, they naturally complete the sentence from past experience with something like “red” or “delicious.” We can produce this “next word” only because years of learning have taught us the “relationships” between words.
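Here is a deliberately tiny sketch of both ideas: “relationships” learned from experience, and the predict, append, repeat loop. It is a toy under loud assumptions, not how GPT, Claude, or DeepSeek work inside: the corpus is made up, the only “relationship” stored is a count of which word followed which (a bigram model), and every function name is hypothetical.

```python
from collections import Counter, defaultdict

# Hypothetical toy corpus; "." marks the end of a sentence.
CORPUS = "apple is red . apple is delicious . apple is red ."


def learn_bigrams(text):
    """Count how often each word is followed by each other word."""
    words = text.split()
    follows = defaultdict(Counter)
    for prev, nxt in zip(words, words[1:]):
        follows[prev][nxt] += 1
    return follows


def predict_next(follows, prev):
    """Return the most frequent successor of `prev`, or None if never seen."""
    if prev not in follows:
        return None
    return follows[prev].most_common(1)[0][0]


def generate(follows, prompt, max_new_words=10):
    """Predict one word, append it, repeat until '.' or the length limit."""
    words = prompt.split()
    for _ in range(max_new_words):
        nxt = predict_next(follows, words[-1])
        if nxt is None:
            break
        words.append(nxt)
        if nxt == ".":
            break
    return " ".join(words)


model = learn_bigrams(CORPUS)
print(generate(model, "apple is"))  # apple is red .
```

A real LLM replaces the count table with a neural network that looks at the whole preceding context, not just the last word. How to model that wider context is exactly the question the rest of this article follows.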

That’s it. The entire history of Large Language Models is just humans constantly trying to answer one question: How do we model this “relationship” effectively?

The First Answer: Remembering in Order (The RNN Approach)

The simplest, most naive idea is to process words the way a person reads a book: from left to right, one word at a time. You store what you’ve read in your head, and when you read the next word, you call up that memory.

This was the logic behind RNNs (Recurrent Neural Networks). Every time the model reads a word, it updates its “current memory” and passes it along like a rolling snowball.
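A minimal sketch of that rolling memory in Python, assuming the classic “vanilla” RNN update h_t = tanh(W_h · h_(t-1) + W_x · x_t), with small random weights standing in for learned ones. The sizes, the seed, and the helper names are illustrative. Two things to notice: the memory `h` has the same fixed size whether the model has read 3 words or 300, and the loop cannot start step t until step t-1 has produced its `h`.

```python
import math
import random

HIDDEN = 4  # size of the fixed "memory" vector (illustrative)
EMBED = 3   # size of each input word vector (illustrative)

rng = random.Random(0)
# Random weights stand in for the ones a real RNN would learn.
W_h = [[rng.uniform(-0.5, 0.5) for _ in range(HIDDEN)] for _ in range(HIDDEN)]
W_x = [[rng.uniform(-0.5, 0.5) for _ in range(EMBED)] for _ in range(HIDDEN)]


def matvec(m, v):
    """Multiply matrix m (a list of rows) by vector v."""
    return [sum(a * b for a, b in zip(row, v)) for row in m]


def rnn_step(h, x):
    """One update: h_t = tanh(W_h @ h_{t-1} + W_x @ x_t)."""
    return [math.tanh(a + b) for a, b in zip(matvec(W_h, h), matvec(W_x, x))]


def run_rnn(inputs):
    """Read word vectors left to right, carrying one fixed-size state."""
    h = [0.0] * HIDDEN
    for x in inputs:  # strictly serial: each step needs the previous h
        h = rnn_step(h, x)
    return h


short = [[rng.uniform(-1, 1) for _ in range(EMBED)] for _ in range(3)]
long_ = [[rng.uniform(-1, 1) for _ in range(EMBED)] for _ in range(300)]
print(len(run_rnn(short)), len(run_rnn(long_)))  # 4 4
```

However long the input, everything the model knows about it has to fit in those four numbers.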

So what’s the problem?

Memory has a limit. You are trying to squeeze the information from a sequence of any length into a fixed-size container (a vector). The longer the text, the more the early information gets pushed out. By the time the model reads the 500th word, it has largely forgotten what the 1st word said.

This isn’t a problem you can solve by tuning hyperparameters. It is a basic fact of information theory: once the possible inputs outnumber the states a fixed-size container can hold, some of that information must be lost.
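The counting argument behind that claim fits in one line. As an illustrative assumption, take a memory of 1,024 numbers stored as 32-bit floats, so $b = 32{,}768$ bits, and a vocabulary of $V = 50{,}000$ words. The memory can be in at most $2^b$ distinct states, while there are $V^n$ distinct histories of length $n$:

$$
V^{n} > 2^{b}
\iff
n > \frac{b}{\log_2 V} = \frac{32768}{\log_2 50000} \approx \frac{32768}{15.61} \approx 2099
$$

So from about 2,100 words on, some pair of different histories must land on exactly the same memory state, and the model can no longer tell them apart. That is only a ceiling under these assumed sizes; trained RNNs typically lose track of early words long before reaching it.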

There was another fatal flaw: it had to run serially. The model couldn’t compute step 4 until step 3 was finished, because step 4 needs the memory that step 3 produces. Even with 10,000 graphics cards (GPUs), the steps along a sequence still had to wait in line, so most of that computing power sat idle.

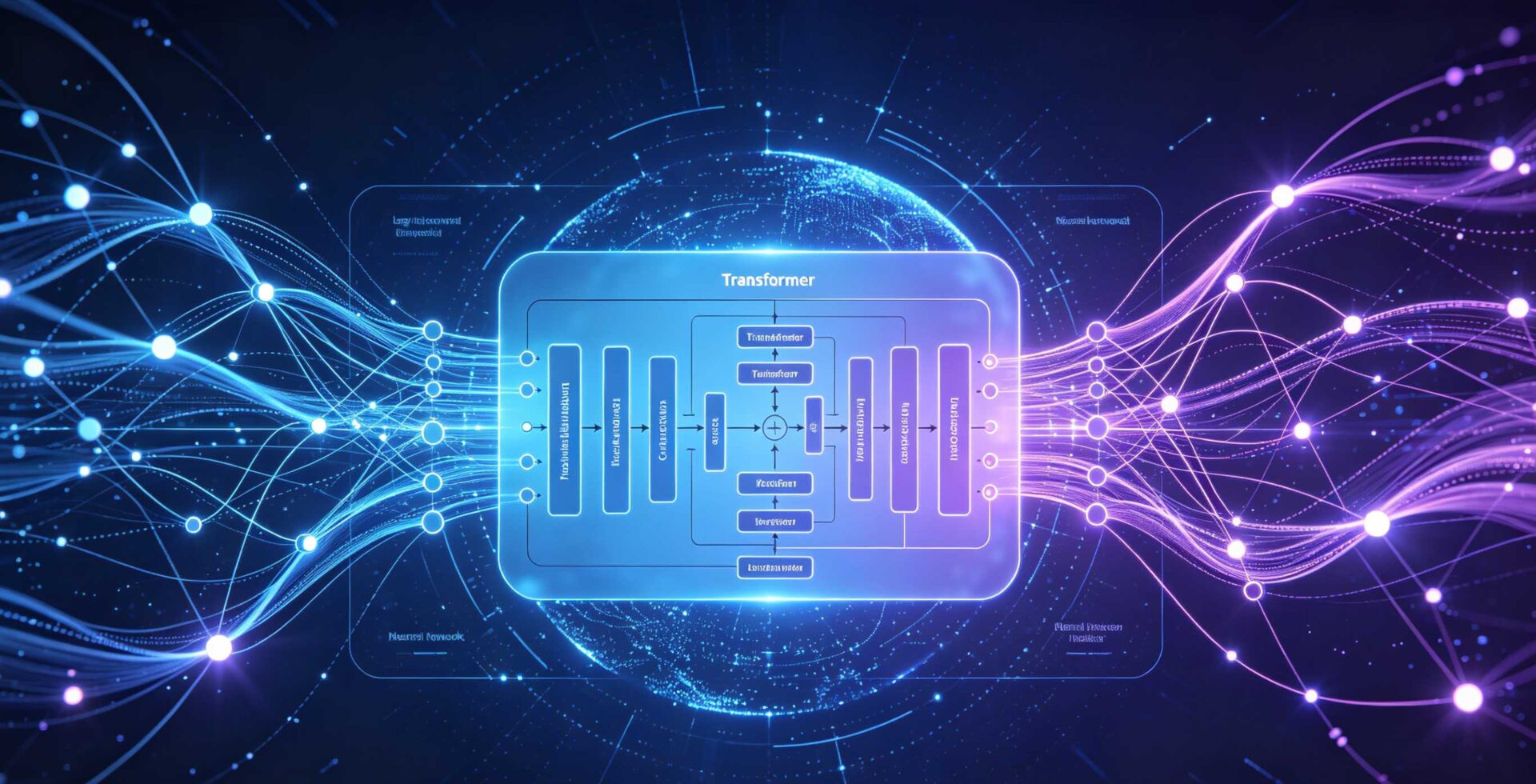

The Second Answer: Don’t Remember, Keep It All (The Transformer Approach)

This is what the Transformer did. Its authors made a decision that sounded naive but turned out to be exactly right: if compressing information causes loss, then don’t compress it.

They decided to keep the representations of all previous words. If you need one, just go get it directly. This is the Attention Mechanism.

How does it “get” the information?

Imagine every word carries three business cards:

- Q (Query): “What am I looking for?”

- K (Key): “What do I contain?”

- V (Value): “What actual information can I give you?”

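The current word’s Query is compared with the Key of every word it is allowed to see, including its own (for a model predicting the next word, that means itself and every earlier word). The comparison is a dot product: a high score means high relevance. A softmax then turns the scores into weights that add up to 1, and the word takes that weighted mix of all the Values: more from relevant words, less from irrelevant ones. The result is a new representation of the current word that blends in information from the whole context.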
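Here is a minimal sketch of that Query/Key/Value mixing in plain Python: scaled dot-product attention, the form used in the original Transformer paper. Assumptions: the Q, K, and V vectors are handed in directly (in a real model they are learned linear projections of each word’s representation, computed by several attention heads in parallel), and the function names are illustrative. The `causal` flag restricts each word to itself and earlier words, which is what a next-word predictor needs.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values, causal=True):
    """Scaled dot-product attention over lists of vectors.

    For each position i: score every visible position j by q_i . k_j / sqrt(d),
    softmax the scores into weights, and return the weighted sum of the v_j.
    With causal=True, position i can only see positions 0..i.
    """
    d = len(keys[0])
    dim_v = len(values[0])
    outputs = []
    for i, q in enumerate(queries):
        visible = range(i + 1) if causal else range(len(keys))
        scores = [sum(a * b for a, b in zip(q, keys[j])) / math.sqrt(d) for j in visible]
        weights = softmax(scores)
        mixed = [sum(w * values[j][c] for w, j in zip(weights, visible)) for c in range(dim_v)]
        outputs.append(mixed)
    return outputs


# Three "words" with made-up 2-dimensional Q, K, V vectors.
q = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
k = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
v = [[10.0, 0.0], [0.0, 10.0], [5.0, 5.0]]
for row in attention(q, k, v):
    print([round(x, 2) for x in row])
```

Every output row is a weighted average of the visible Value vectors, so a word whose Query strongly matches an earlier word’s Key ends up carrying mostly that word’s Value. Note that the loop over `i` has no dependency between iterations; each position could be computed independently.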

All words do this at the same time. No word has to wait for the previous one to finish, so everything happens in parallel.
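Stacking every word’s Query, Key, and Value as the rows of matrices $Q$, $K$, and $V$ ($n$ words, key size $d_k$) turns the whole computation into a few matrix operations, exactly the kind of work GPUs parallelize well. This is the scaled dot-product attention formula from “Attention Is All You Need.” The paper’s decoder blocks illegal (future) connections by setting their scores to $-\infty$; here that is written as an explicit mask matrix $M$, with $M_{ij} = 0$ when $j \le i$ and $M_{ij} = -\infty$ when $j > i$:

$$
\operatorname{Attention}(Q, K, V) = \operatorname{softmax}\!\left(\frac{QK^{\top}}{\sqrt{d_k}} + M\right) V,
\qquad Q, K \in \mathbb{R}^{n \times d_k},\; V \in \mathbb{R}^{n \times d_v}
$$

The product $QK^{\top}$ produces all $n \times n$ scores in one shot, and the softmax is applied row by row.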

What is the cost?

The amount of computation skyrockets. Every word has to score its relationship with every other word, so a sequence of n words needs n × n scores: double the length and the work quadruples. This is the famous quadratic complexity problem.
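A quick worked example of that quadratic growth, counting one attention score per pair of words (per layer, per attention head). The byte figure assumes 2-byte (16-bit) numbers and a full score matrix held in memory; both are illustrative assumptions.

$$
\begin{aligned}
n = 1{,}000 &\;\Rightarrow\; n^2 = 10^{6} \text{ scores} \\
n = 2{,}000 &\;\Rightarrow\; n^2 = 4 \times 10^{6} \text{ scores} \quad \text{(twice the words, four times the work)} \\
n = 100{,}000 &\;\Rightarrow\; n^2 = 10^{10} \text{ scores} \times 2 \text{ bytes} = 2 \times 10^{10} \text{ bytes} = 20 \text{ GB}
\end{aligned}
$$

For comparison, the original paper puts the per-layer cost of self-attention at $O(n^2 \cdot d)$ versus $O(n \cdot d^2)$ for a recurrent layer ($d$ is the representation size), but needs only $O(1)$ sequential steps instead of $O(n)$. Attention buys parallelism with extra arithmetic.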

But in exchange, it gained two fundamental advantages:

- No compression, no loss. The relationship between any two words is established directly. There are no middlemen distorting the message.

- Full parallelism. Thousands of GPUs can compute at once. For the first time, all that computing power could actually be kept busy. This provided the physical foundation for “more power creates miracles.”

The Aggressive Innovation: “Attention Is All You Need”

The story isn’t over. Before Transformers, people had used attention mechanisms, but they only used them as a patch to help RNNs.

The authors of the Transformer took a more aggressive step. The title of their paper was Attention Is All You Need. The key phrase isn’t “Attention”; it’s “All You Need.” You no longer need the complex recurrent loops of the past.

This decision opened a massive door. Without the tricky recurrent structure, the whole network became a clean stack of feed-forward computation. Together with residual connections and layer normalization, this gave training signals (gradients) a short, smooth path back through every layer, instead of a long chain through every time step. You could stack many more layers and make the model much wider, and the architecture itself imposed no obvious ceiling on parameter count.

Then came the scaling laws: the more parameters, data, and computing power you threw at the model, the lower its loss fell, and the improvement followed a smooth curve that could be predicted in advance from smaller runs. A law like this can only show up when the architecture isn’t the bottleneck. RNNs broke down when stacked too high; Transformers did not.
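What “predicted in advance” means can be shown with one commonly cited form of these laws (Kaplan et al., 2020), which relates test loss $L$ to the number of model parameters $N$ when data and compute are not the limiting factor. $N_c$ and $\alpha_N$ are constants fitted from experiments; no specific values are assumed here:

$$
L(N) \approx \left(\frac{N_c}{N}\right)^{\alpha_N}
\quad\Longrightarrow\quad
\log L \approx \alpha_N \log N_c - \alpha_N \log N
$$

On log-log axes this is a straight line with slope $-\alpha_N$, so a line fitted on small, cheap models can be extended to forecast much larger ones. It also shows that the returns are steady rather than explosive: each doubling of $N$ multiplies the loss by the same factor, $L(2N)/L(N) = 2^{-\alpha_N}$.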

The Transformer was the first architecture that let computing power drive a sustained positive feedback loop: the more you invest, the stronger it gets, with no ceiling in sight so far. This is the root reason it became the foundation of LLMs. Not because the math was fancy, but because it scaled.

A Hidden Strength: Simplicity Means Flexibility

There is one more strength that is truly underestimated. The Transformer was not designed around specific language rules. It makes very few assumptions and says only one thing: any two positions can have a relationship of any strength.

The architecture doesn’t care if that “position” is a word in a sentence, a pixel in an image, an amino acid in a protein, or a frame in a video.

This is why ViT (Vision Transformer) uses it for images, AlphaFold uses attention to predict protein structures, and Sora uses it to generate video. Because it assumes so little, it applies to almost everything, which is why it has swept across so many kinds of data.
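To see why the architecture doesn’t care what a “position” is, here is a sketch of the first step of a Vision Transformer: cut an image into square patches and flatten each one, so the image becomes a sequence, just like a sentence. Assumptions: the image is a plain 2-D grid of pixel values, and the helper name is hypothetical. A real ViT then multiplies each flattened patch by a learned matrix and adds a position embedding, both omitted here.

```python
def image_to_patch_sequence(image, patch):
    """Split an H x W grid into non-overlapping patch x patch tiles.

    Each tile is flattened row by row, and tiles are returned in raster
    order (left to right, top to bottom), giving a sequence of "tokens".
    """
    h, w = len(image), len(image[0])
    if h % patch or w % patch:
        raise ValueError("image size must be divisible by patch size")
    seq = []
    for top in range(0, h, patch):
        for left in range(0, w, patch):
            tile = [image[r][c]
                    for r in range(top, top + patch)
                    for c in range(left, left + patch)]
            seq.append(tile)
    return seq


img = [[r * 4 + c for c in range(4)] for r in range(4)]  # 4x4 toy "image"
tokens = image_to_patch_sequence(img, 2)
print(len(tokens))  # 4
print(tokens[0])    # [0, 1, 4, 5]
```

At a more realistic scale, a 224 × 224 image cut into 16 × 16 patches gives 14 × 14 = 196 tokens, each holding 16 × 16 = 256 values per color channel. From there on, the model treats them exactly like a sequence of words.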

Will It Be Replaced?

Back to the original question: the Transformer got where it is today because of one core, first-principles judgment. When modeling the relationships in language, you cannot have a “middleman” compressing information; any two words must be able to talk directly.

If you accept this judgment, the attention mechanism is almost the only logical solution. Parallelism, scalability, and generalization are just natural results.

Will it be replaced? Yes.

But whatever replaces it will likely be solving the same problem, just in a way that isn’t so expensive.

A Note on History: The Match and the Gunpowder

The Transformer didn’t become the foundation of LLMs just because it was clever. “Timing” and “Environment” were also essential.

Timing: GPU computing power exploded between 2017 and 2020, just in time to feed the Transformer’s hunger for parallel computation. In 2010, the same architecture would have been too heavy to run at scale.

Environment: The internet had accumulated decades of text, a massive corpus that happened to be available for training in this era. Without data, even the best architecture is an empty shell.

The Transformer’s success came from architectural innovation, a computing revolution, and the data dividend all colliding at the same moment. This isn’t to belittle it. It is to say that true revolutions in technology history are never just the invention of one genius. They happen when countless conditions become ripe, and one design happens to be the match that lights the gunpowder.

The Transformer was that match.