While every other AI company is racing to release bigger and faster models, Anthropic did something strange. It did not launch a new flagship Claude model or a flashy new chatbot. Instead, it announced something that sounds pretty boring on paper: The Anthropic Institute (TAI).

But do not let the boring name fool you. This might be the most important thing any AI company has done in 2026. While OpenAI is busy fighting with Elon Musk and burning through cash, Anthropic is quietly trying to solve the biggest question of our time: How do humans actually live with AI without breaking society?

What Is The Anthropic Institute (TAI)?

Think of TAI as Anthropic’s internal think tank. But instead of writing papers that nobody reads, they are tackling four huge problems:

- Economic Diffusion: How AI changes jobs and money

- Threats and Resilience: How to stop AI from hurting us

- Real-World AI Systems: How people actually use AI (not just how they should use it)

- AI-Driven Research: What happens when AI starts building better AI

TAI is hiring researchers from around the world to study these questions. But this is not just another corporate research team. Anthropic compares this moment to when Google first said “Don’t be evil” back in the early days of the internet. It is a promise to think about people first, not just profits.

The Job Problem: AI Makes You Work Harder, Not Less

The first thing TAI is studying is Economic Diffusion, a fancy way of saying “how AI moves money and jobs around.”

Here is the scary truth they found: AI is different from past tech revolutions. Steam engines and electricity replaced hard physical labor. But AI is coming for our brains. It can write code, design graphics, and write reports better than many humans.

The New Math of Work:

- Old thinking: AI helps you finish work faster, so you work less

- New reality: AI helps you finish work faster, so your boss makes you do five times more work

TAI asks a tough question: If 3 people with AI can do the work of 300 people, what happens to the other 297? Do they get fired? Or does everyone just work themselves to death?
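The arithmetic behind both scenarios can be sketched in a few lines of Python. This is only an illustration: the function names are hypothetical, and the only inputs taken from the article are the 3, the 300, and the “five times” figure.

```python
# Hypothetical helpers for illustration only.
# The figures 3, 300, and 5x come from the article; nothing else is measured data.

def displaced_workers(original_headcount, remaining_headcount):
    """Workers no longer needed if total output stays the same."""
    return original_headcount - remaining_headcount


def productivity_multiple(original_headcount, remaining_headcount):
    """How many pre-AI workers each remaining worker has to replace."""
    return original_headcount / remaining_headcount


def output_if_everyone_stays(headcount, per_worker_multiple):
    """Total output, in units of one pre-AI worker, if nobody is let go."""
    return headcount * per_worker_multiple


print(displaced_workers(300, 3))         # 297
print(productivity_multiple(300, 3))     # 100.0
print(output_if_everyone_stays(300, 5))  # 1500
```

The first two numbers describe the “fewer people, same output” path: each remaining worker covers 100 old jobs. The third describes the “same people, more output” path, where the boss simply expects five times as much from everyone.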

To track this, TAI relies on the Anthropic Economic Index. This is not just another academic paper. It is a regularly updated scoreboard showing which jobs AI is eating first, and which new workers are getting crushed before they even start their careers.

The Hidden Cost: AI Is a Money and Resource Monster

TAI is also counting the hidden costs of AI. Every time you ask ChatGPT a question or make an AI image, you burn something called “tokens.” Tokens need computing power. Computing power needs chips, electricity, and water for cooling.

In 2026, we are already seeing the damage. AI’s hunger for computer memory has caused phone and laptop prices to jump. Memory shortages are real. Yet at the same time, every tech company wants to put AI in everything.

TAI’s Economic Index will track this too. They want to show the world that AI is not just “free magic.” It has real costs that ripple through the whole economy.

Your Brain on AI: Why We Are Getting Dumber

Here is where it gets personal. TAI found that AI is making humans stop thinking.

The Mushroom Test:

A hiker found a wild mushroom and asked an AI, “Can I eat this?” The AI said, “Yes, it is delicious.” It was actually deadly poisonous. A kid asked an AI about a mousetrap. The AI said it was a “toy car.” The kid touched it and got his finger snapped.

These sound like funny mistakes, but they point to a deadly serious problem. AI is confident even when it is wrong. Google’s best models are only right about 91% of the time. That means roughly 1 in 11 answers is wrong.
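To see what a 91% hit rate means in practice, here is a rough sketch of the arithmetic. It assumes every answer is an independent coin flip with the same 91% chance of being right, which is a simplification and not something the article states. The function names are made up for this example.

```python
# Illustrative arithmetic only.
# Assumption: each answer is independent and right with the same probability.

def error_rate(accuracy):
    """Share of answers that are wrong."""
    return 1 - accuracy


def one_wrong_in_every(accuracy):
    """On average, one wrong answer per this many answers."""
    return 1 / error_rate(accuracy)


def chance_of_at_least_one_wrong(accuracy, questions):
    """Probability that at least one of `questions` answers is wrong."""
    return 1 - accuracy ** questions


print(round(one_wrong_in_every(0.91), 1))                # 11.1
print(round(chance_of_at_least_one_wrong(0.91, 10), 2))  # 0.61
```

Under that assumption, the error rate works out to about one wrong answer in every 11. Ask ten questions in a row and there is about a 61% chance that at least one answer is wrong, which is why skipping the fact-check adds up quickly.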

But here is the bigger danger: When AI is always there to answer, humans stop checking facts. We stop thinking. We just accept the AI’s answer.

TAI calls this “cognitive outsourcing”: handing your thinking over to a computer. If everyone starts thinking the same way because they all use the same two or three AI models, humanity loses its creativity. We become copies of copies.

The internet is already turning into a “garbage mountain.” Real travel tips are buried under AI-written blog posts that look pretty but are full of lies. TAI wants to stop this before we forget how to think for ourselves.

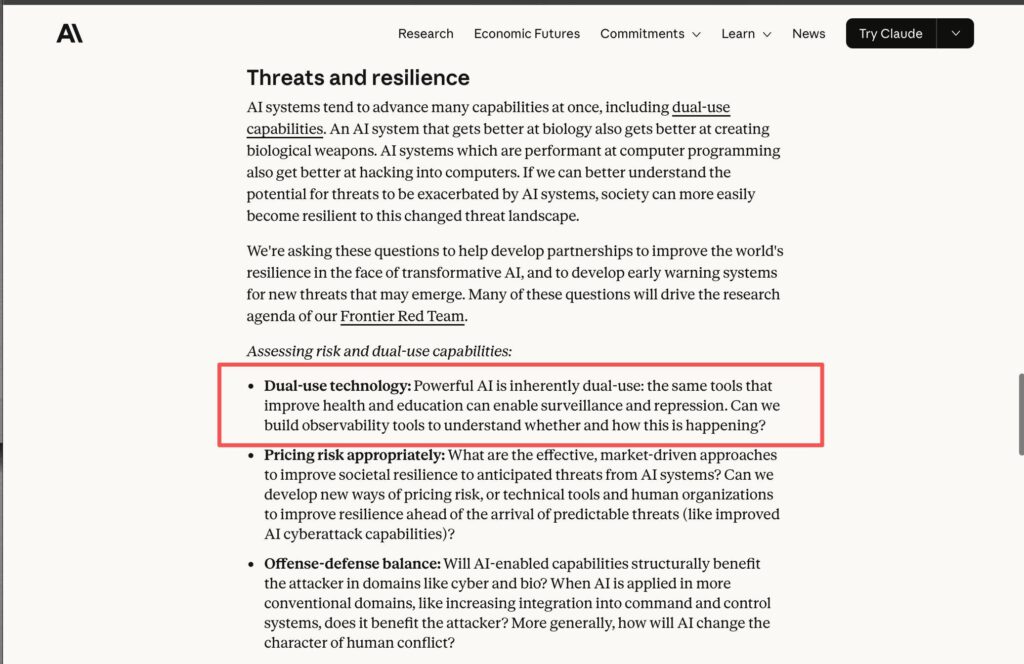

The Double-Edged Sword: Good AI vs. Bad AI

TAI also warns about “dual-use,” a simple idea with a scary meaning. It means the same AI tool can be used for good or evil.

- An AI that designs new medicines can also design biological weapons

- An AI that writes software can also hack into banks and power plants

- An AI that drives cars can also crash them on purpose

Right now, these AIs are talking to us through phone screens. But soon they will control cars, factory robots, drones, and security systems. A mistake in a phone app ends with a “sorry.” A mistake in a self-driving car or factory robot can kill people.

The Speed Problem:

AI improves every few weeks. But human laws change every few years. This creates a “naked run” period: a stretch where AI races ahead with no safety rules covering it.

Stopping the Robot Uprising Before It Starts

To handle these risks, TAI relies on two defense systems:

1. Frontier Red Team

This is a group of hackers whose job is to attack Anthropic’s own AI. They try to trick it, break it, and make it do dangerous things—before criminals or enemy countries can. Think of them as vaccine testers for AI.

2. Fire Drill Scenarios

TAI is running practice emergencies with governments and other tech companies. They are pretending that an “intelligence explosion” (when AI starts improving itself faster than humans can control) is already happening. They want to know: Can we hit the brakes if AI gets too smart too fast?

Why This Is Different From OpenAI

Let us be honest: Most AI companies right now are a mess. OpenAI is fighting Elon Musk in court. Other AI startups are burning cash and faking benchmark scores to get more investor money. The industry attitude is “move fast and break things, and worry about safety later.”

Anthropic is doing the opposite. They are hitting the brakes to look at the road map.

Is this just good marketing? Maybe. Anthropic knows that big companies and governments are scared of AI disasters. By looking responsible, they might win bigger contracts. But there is more to it.

The Long-Term Benefit Trust (LTBT)

TAI reports directly to something called the Long-Term Benefit Trust. This group has the power to stop Anthropic from making money if that money comes from dangerous AI uses. It is legally required to put humanity’s long-term safety above quarterly profits.

This is like Google’s old “Don’t be evil” rule, but with actual teeth.

In a world where every AI company is shouting “look how fast we are,” Anthropic is asking “are we going in the right direction?”

The Anthropic Institute is not as exciting as a new chatbot that writes poetry. But it might be the most important thing Anthropic has ever built. By studying economics, human thinking, and safety before disaster strikes, they are trying to make sure AI helps humanity instead of replacing it.

In the race to build super-smart AI, someone finally asked: Who is watching the speedometer?

TAI is the answer. And that makes it bigger than any model release.